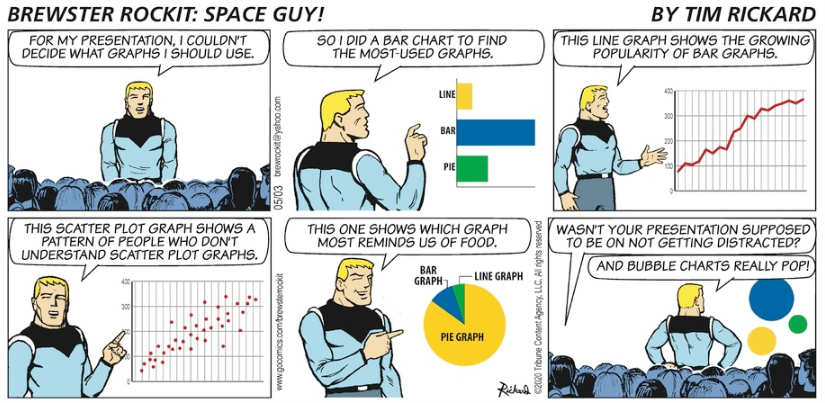

Can different statistics about the same topic support different arguments? On gun violence, here is one conclusion based on rates:

In reality, the region the Big Apple comprises most of is far and away the safest part of the U.S. mainland when it comes to gun violence, while the regions Florida and Texas belong to have per capita firearm death rates (homicides and suicides) three to four times higher than New York’s. On a regional basis it’s the southern swath of the country — in cities and rural areas alike — where the rate of deadly gun violence is most acute, regions where Republicans have dominated state governments for decades.

Similarly, a 2022 brief posted by Drexel’s Urban Health Collaborative shows large differences in per capita gun deaths across major American cities, with New York at the bottom of the list.

But the data could be interpreted another way. Rates express the number of occurrences per a set number of people, for example per 100,000 residents. What do the absolute numbers say about gun deaths? One compilation of data from The University of Sydney shows 804 gun deaths in 2019.

Or consider a 2022 article in the New York Times looking at shootings in the city:

Shootings are twice as high as in the years preceding the pandemic, and the burden falls primarily on Black and Latino neighborhoods. More than 1,800 shootings were reported annually in the past two years after dropping under 900 in 2018.

The absolute numbers sound high and can shape perceptions of how dangerous the city is:

But fresh anxieties have driven warnings about a return to New York’s “bad old days,” when there were many years with more than 2,000 murders. To some, the resemblance between the periods lies not in the crime or the data, but in the coverage.

Rates are often used because they allow comparisons across communities with different population sizes. New York City has many shootings in absolute terms, but it is also by far the largest city in the United States. A larger city will have more crimes to report on, in part simply because it has more people.
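To make the difference between counts and rates concrete, here is a minimal sketch in Python. The two cities and all of their figures are hypothetical, invented only for illustration, and the `rate_per_100k` helper is my own name rather than anything from the sources quoted above.

```python
def rate_per_100k(count: int, population: int) -> float:
    """Return the number of occurrences per 100,000 residents."""
    return count * 100_000 / population


# Hypothetical figures for illustration only; these are NOT real data.
cities = {
    "City A": {"shootings": 2_000, "population": 8_000_000},
    "City B": {"shootings": 300, "population": 400_000},
}

# Compute the per capita rate for each hypothetical city.
rates = {
    name: rate_per_100k(c["shootings"], c["population"])
    for name, c in cities.items()
}

for name, c in cities.items():
    print(f"{name}: {c['shootings']} shootings, {rates[name]:.1f} per 100,000")
```

In this made-up case, City A has far more shootings in absolute terms, yet City B's rate is three times higher. Which number gets quoted determines which city sounds more dangerous.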

Of course, if we are at a point where people just want to find a statistical interpretation that fits their perspective, we have bigger problems on our hands than simply deciding which numbers best reflect reality.

(See this earlier post involving rates on whether Chicago is the “murder capital” of the United States.)